The boring AI infrastructure

This week in AI & marketing

Four things happened this week that all point in the same direction, and none of them came from a model launch.

Google added an “Agentic Browsing” category to Lighthouse, the audit tool most web teams already use. Android started letting Gemini build carts, book rides, and fill forms across apps from a power-button press. Stripe and American Express rolled out payment systems built specifically for AI agents, including an Amex liability framework for when agents make purchasing mistakes. And we finally got real numbers on how long it takes for a new page to appear as a citation in ChatGPT and Claude.

For marketers, this is the week the agentic web stopped being a conference panel and started being plumbing. Browser standards, mobile defaults, payment rails, citation timelines. The unglamorous parts of the stack that decide what actually becomes a requirement versus what stays a demo.

The practical question over the next few quarters is whether your site, product data, checkout flow, and content can be read and acted on by something that is not a person. Because increasingly, the first interaction a customer has with your brand will happen inside an assistant, and you will not be in the room. What represents you is your structured data, your page hierarchy, your domain authority, and whether an agent can complete a task on your site without getting stuck.

Infrastructure means this will be everywhere, all at once. It also means that you have to understand how it works. If you don’t know it, you can’t build on it. If you can’t build on it, you can’t use it. If you can’t use it, you can’t leverage it. If you can’t leverage it, others will, and well… bad luck for you.

It seems the world is ready for the agentic web. Are you?

— Torsten and Peter

PS: for the next few months, we will publish AI-Ready CMO once a week. This is a minimum schedule, but it gives us, as editors, more flexibility to provide you with additional insights when needed.

1. Google Is Quietly Standardizing the Agentic Web

Google is quietly preparing the internet for AI agents, and this might end up being more important than most flashy model launches.

Last week, developers spotted a brand-new “Agentic Browsing” category inside Google Lighthouse, the auditing tool basically every serious web team already uses. Until now, Lighthouse graded your website for humans: speed, accessibility, SEO. The new category asks a different question entirely: can an AI agent actually use your site?

Without going into the technical details, Google wants sites to expose the actions agents can take, maintain valid llms.txt files (similar in spirit to robots.txt, which you may already know), and clean up accessibility layers so agents can reliably understand buttons, forms, and workflows. In other words, the browser is slowly becoming machine-readable infrastructure, not just a human interface.
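If you want a feel for what "valid llms.txt" could mean in practice, here is a minimal sketch. It assumes the conventions of the community llms.txt proposal (an H1 title, an optional blockquote summary, then sections listing markdown links). We do not know exactly what Lighthouse checks, so treat the rules, the `check_llms_txt` helper, and the sample file as illustrative assumptions, not Google's audit logic.

```python
import re

# Assumed convention: list items look like "- [title](url)" with optional notes after.
LINK_RE = re.compile(r"^- \[([^\]]+)\]\(([^)\s]+)\)")


def check_llms_txt(text: str) -> list[str]:
    """Return a list of problems; an empty list means these basic checks passed."""
    problems = []
    lines = [ln.rstrip() for ln in text.splitlines() if ln.strip()]

    # The file should open with a single H1 naming the site or project.
    if not lines or not lines[0].startswith("# "):
        problems.append("first non-empty line should be an H1 title ('# Name')")

    # There should be at least one link an agent can follow.
    if not any(LINK_RE.match(ln) for ln in lines):
        problems.append("no markdown links of the form '- [title](url)' found")

    # Every list item should be a well-formed markdown link.
    for ln in lines:
        if ln.startswith("- ") and not LINK_RE.match(ln):
            problems.append(f"list item is not a markdown link: {ln!r}")

    return problems


# Hypothetical llms.txt for an imaginary store.
SAMPLE = """# Example Store

> Hypothetical summary of what the site offers.

## Products

- [Catalog](https://example.com/catalog.md): full product list
- [Returns policy](https://example.com/returns.md)
"""

print(check_llms_txt(SAMPLE))   # no problems for the sample file
print(check_llms_txt("hello"))  # missing title and missing links
```

A check like this is cheap to run in a deploy pipeline, which is the point: agent readiness becomes one more automated test rather than a strategy deck.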

This matters because standards in the agentic web have been messy and fragmented so far. Lots of demos, not much consensus. But once Google starts baking these ideas directly into Chrome, the field can solidify fast.

If you work in e-commerce, SaaS, travel, ticketing, or basically any workflow-heavy business, “agent readiness” is going to start sounding a lot like “mobile readiness” did fifteen years ago. Not optional. Just another thing your customers quietly expect to work.

2. Android Is Turning Into an Agent Layer

Google is also making the previous story much more urgent by pushing Gemini directly into Android itself.

Instead of making you open ChatGPT or Gemini as a separate destination, Android itself is becoming the agent layer. Long-press the power button and Gemini can build grocery carts, reserve parking, reorder food, book rides, summarize webpages, or fill out forms across apps.

Your customer increasingly won’t “browse” your website the way humans historically did. Their agent will compare products, fill forms, navigate checkout flows, and potentially make decisions before a human even sees your landing page. Google’s demo video is worth watching; it is eye-opening.

And yes, there are still rough edges. Privacy concerns are obvious. User trust will take time. Most people won’t hand over full autonomy overnight. But some companies will make the mistake of assuming that means this shift is slow. It won’t be.

3. Stripe and Amex Are Building the Trust Layer for AI Commerce

One missing puzzle piece for agentic commerce has always been payments.

That’s changing too. Both Stripe and American Express rolled out systems that let AI agents securely transact on your behalf without exposing raw payment credentials. Stripe’s upgraded Link wallet can generate one-time-use cards or shared payment tokens for agents. American Express went even further with “Agent Purchase Protection,” where Amex explicitly backs certain agent-caused purchasing mistakes.

Read that again because it’s easy to miss how weird this is: financial infrastructure companies are now designing liability frameworks for autonomous software agents.

The interesting question for us is what happens when discovery, comparison, checkout, and even repurchasing get compressed into a mostly invisible machine-to-machine workflow. Brand still matters. Trust still matters. But the actual interaction surface may increasingly happen inside an assistant instead of on your homepage.

4. AI Citation Timelines Finally Have Real Numbers

There’s a surprisingly interesting new data point around AI search visibility.

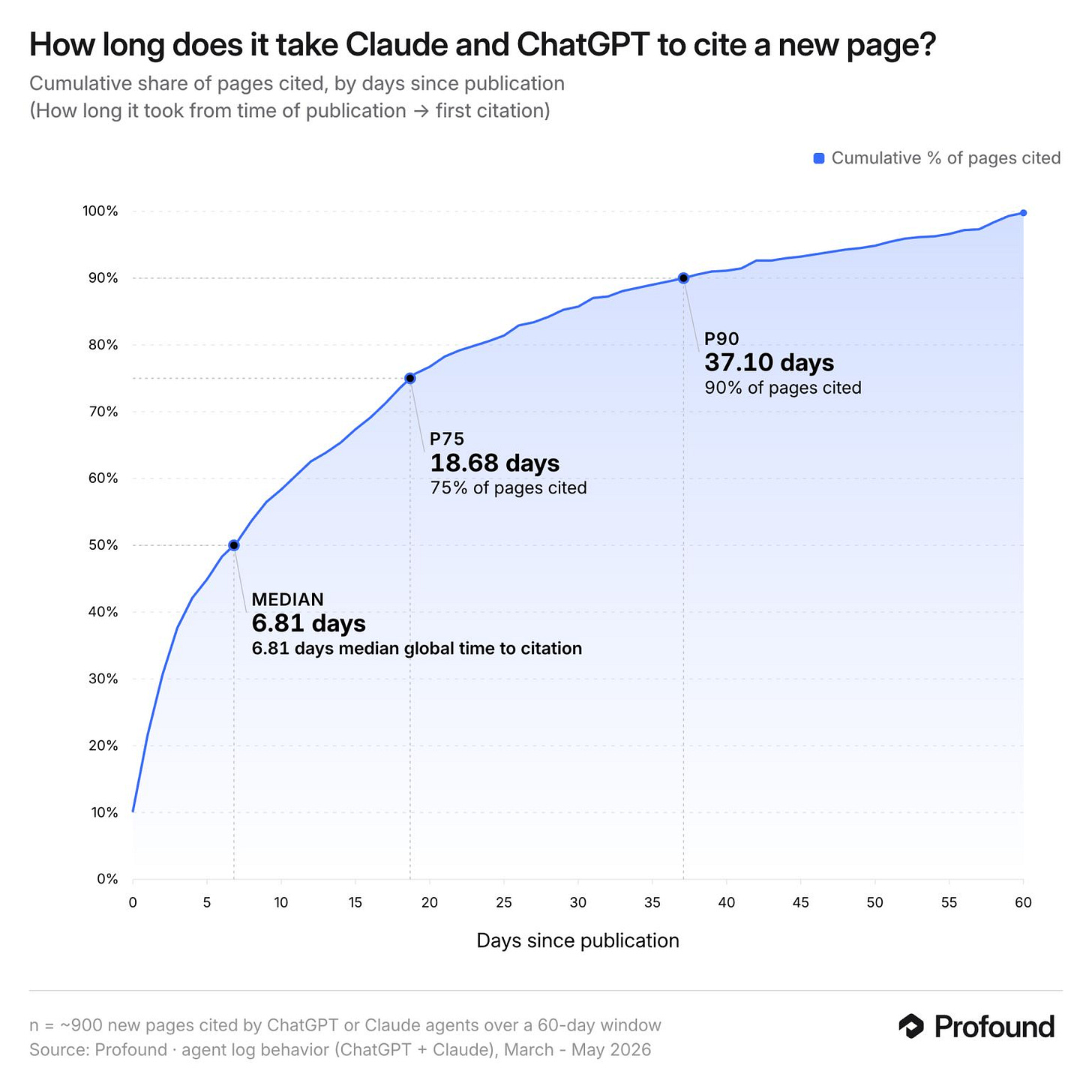

Josh Blyskal from Profound published early research tracking how long it takes newly published pages to get cited inside systems like ChatGPT and Claude. Median time to first citation: 6.8 days. Seventy-five percent of pages got cited within about 19 days. Ninety percent within 37 days.
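To read those numbers against your own content, you can compute the same statistics from your own logs. The sketch below uses made-up days-to-first-citation values (not Profound's data) and a hypothetical helper, `share_cited_within`, to show what "median" and "percent cited within N days" mean.

```python
from statistics import median

# Hypothetical days-to-first-citation for ten pages (illustrative only).
days = [2, 3, 5, 6, 7, 9, 14, 19, 30, 41]


def share_cited_within(days_to_citation, window):
    """Fraction of pages whose first citation arrived within `window` days."""
    hits = sum(1 for d in days_to_citation if d <= window)
    return hits / len(days_to_citation)


print(median(days))                  # 8.0: half the pages were cited within 8 days
print(share_cited_within(days, 19))  # 0.8: 80% cited within 19 days
print(share_cited_within(days, 37))  # 0.9: 90% cited within 37 days
```

The long tail is the useful part: a median under ten days can coexist with one page in ten taking more than a month.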

That’s interesting partly because it contradicts some earlier “AI indexing happens almost instantly” narratives floating around LinkedIn.

The likely reality is that there are two separate clocks: discovery (getting crawled and indexed) and retrieval. Once your content is fully indexed and visible, models can surface it fast. But getting into those discovery systems reliably still depends on old-fashioned fundamentals: crawlability, authority, clean site structure, semantic clarity, and whether your content is actually useful enough to retrieve for a real query.

So what should you actually do with this?

Use Lighthouse to make sure your site is readable for LLMs. Publish under trusted domains whenever possible. Structure pages clearly with concrete answers, strong headings, and clear signals of expertise. Remember, AI visibility is not some magical new discipline completely detached from good web publishing practices. Some companies are looking for secret “AEO hacks” while their sitemap is broken and half their product data lives inside PDFs.
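The "broken sitemap, product data in PDFs" problem is easy to test for. Here is a minimal sketch, assuming a standard sitemaps.org XML sitemap. The `audit_sitemap` helper and the sample URLs are hypothetical, and a real check would fetch your live sitemap instead of an inline string.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def audit_sitemap(xml_text):
    """Parse a sitemap and return (all_urls, pdf_urls).

    ET.fromstring raises ParseError if the XML is malformed,
    which is itself the "broken sitemap" signal.
    """
    root = ET.fromstring(xml_text)
    urls = [loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS) if loc.text]
    pdfs = [u for u in urls if u.lower().endswith(".pdf")]
    return urls, pdfs


# Hypothetical sitemap for an imaginary store.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/products/widget</loc></url>
  <url><loc>https://example.com/specs/widget.pdf</loc></url>
</urlset>"""

urls, pdfs = audit_sitemap(SAMPLE_SITEMAP)
print(f"{len(urls)} URLs listed, {len(pdfs)} of them PDFs")
for u in pdfs:
    print("Consider publishing this as an HTML page:", u)
```

Nothing here is specific to AI. It is the same hygiene that helps any crawler, which is the argument of this section.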

5. Google’s New Video Model Might Follow the Nano Banana Playbook

Finally, something lighter: Google’s upcoming Gemini Omni video model is starting to leak ahead of I/O, and it looks really good.

Generation quality may not yet fully match the top frontier models, but editing and conversational control seem extremely strong. Users are reportedly remixing clips, swapping objects, rewriting scenes via chat, and iterating interactively rather than treating video generation as a one-shot trial-and-error prompt.

That’s probably the more important direction anyway. Most marketers do not actually need Hollywood-grade video generation every day. We need fast iteration, editing, adaptation, localization, resizing, versioning, and campaign tweaking without opening twelve different tools.

We switched heavily toward Nano Banana internally because it quietly became the most useful image workflow model, not necessarily the prettiest benchmark demo. Google may be running the exact same playbook for video now.
