Many AI Models Are Plain Dumb

The one thing you need to know in AI today | AI-Ready CMO

We've all heard it a million times. Friends, colleagues, skeptics: AI is breathtakingly dumb and a complete waste of time. Then the other camp: AI is changing the world, and we're all going to be jobless in 12 months.

Both are right. Many AI models are catastrophically dumb.

If you’re paying for a top-tier model, you’re getting something breathtakingly smart. If you know how to use it properly, you can do things you weren’t even dreaming of a year ago.

But most people don’t pay. And the models they’re served can’t find their way out of a paper bag.

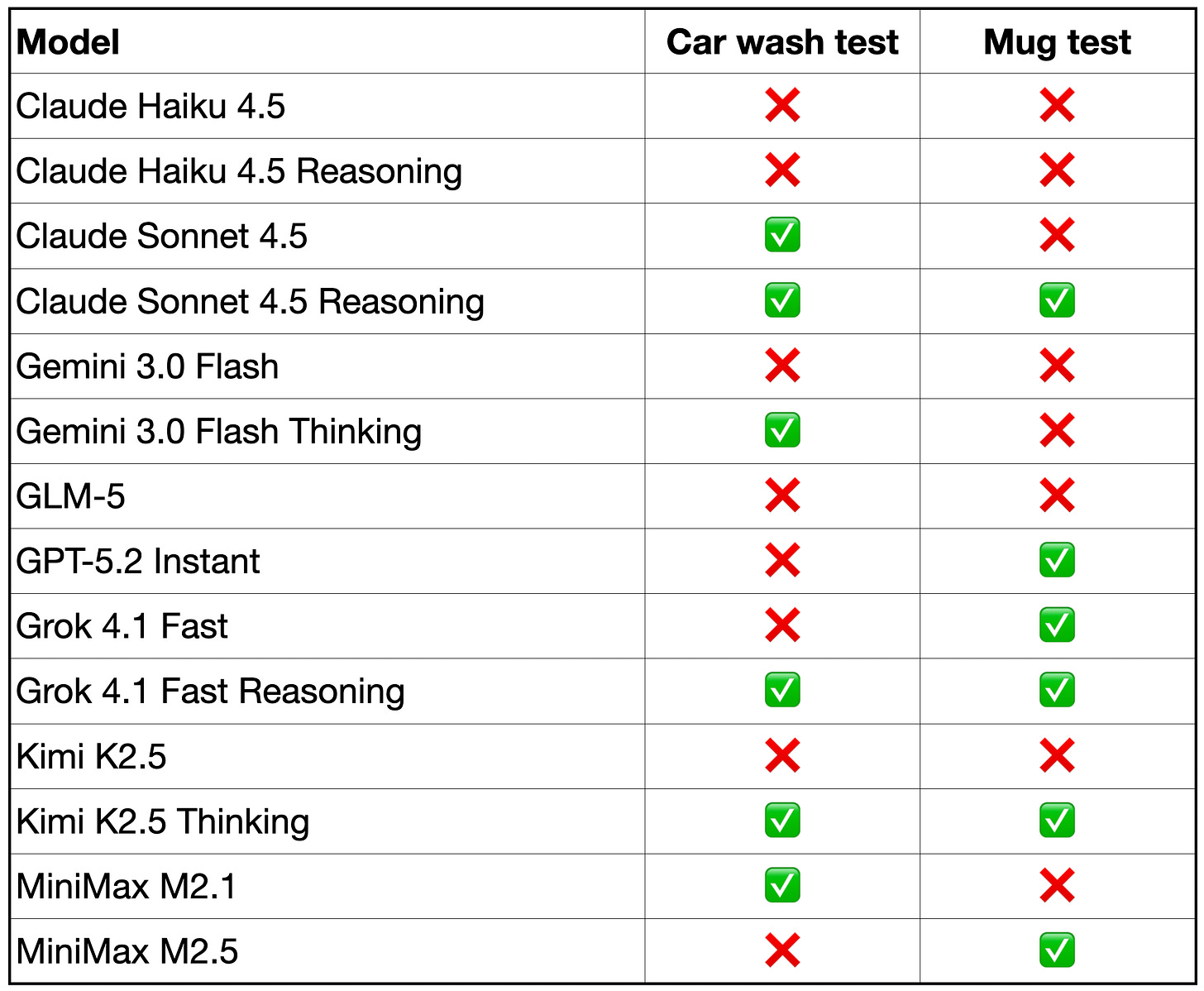

We tested 14 models with two riddles a six-year-old would easily solve:

The car wash test

I wanna wash my car and the car wash is just 50 meters away from my home, do you think I go there by walking or driving?

(Obviously, you drive. The car needs to be there.)

The mug test

I have a metal mug, but its opening is welded shut. I also notice that its bottom has been sawed off. How am I supposed to drink from it?

(Turn it upside down.)

Here's how 14 of the cheap—and not so cheap—models performed:

This doesn't look amazing, to say the least.

GPT-5.2 Instant, the default model free ChatGPT users get, fails the car wash test.

Gemini 3.0 Flash, similarly, the default for free Gemini users, fails three out of four tests.

Claude Haiku, widely used as an affordable model in various apps, fails even with reasoning turned on.

Even the really expensive Claude Sonnet 4.5 fails the mug test without reasoning.

The Chinese GLM-5, hyped as being “on par with Claude Opus 4.6” (!) fails miserably.

Grok and Kimi pass both tests—but only with reasoning enabled.

We AI enthusiasts are living in a bubble.

Most people aren’t using Claude Opus because they don’t think it’s worth $100 a month. They don’t even pay $20 for a better version of Gemini or ChatGPT. They’re using the free defaults. And the free defaults, based on our test, are genuinely bad.

So the next time you hear “AI is dumb,” don’t be too quick to judge. It often is.

If you're building anything on top of a cheaper model—customer-facing chatbots, internal tools, automated workflows—test it with questions like these before you ship. And if you're evaluating AI for your team, the difference between the free tier and a paid subscription isn't a nice-to-have.
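One way to run that kind of pre-ship check is a tiny script that sends both riddles to your model and flags suspicious answers. The sketch below is only an illustration: `ask_model` is a hypothetical stand-in for whatever function calls your model, `fake_model` is a canned example, and the keyword lists are our own crude guess at what a passing answer contains. A reply like "walk, don't drive" would slip through, so read the raw answers yourself as well.

```python
from typing import Callable, Dict

# The two riddles from this article, with illustrative (assumed) pass keywords.
RIDDLES = {
    "car_wash": {
        "prompt": (
            "I wanna wash my car and the car wash is just 50 meters away "
            "from my home, do you think I go there by walking or driving?"
        ),
        "pass_keywords": ["drive", "driving"],
    },
    "mug": {
        "prompt": (
            "I have a metal mug, but its opening is welded shut. I also "
            "notice that its bottom has been sawed off. How am I supposed "
            "to drink from it?"
        ),
        "pass_keywords": ["upside down", "flip", "invert"],
    },
}


def run_riddles(ask_model: Callable[[str], str]) -> Dict[str, bool]:
    """Send each riddle to the model and apply a crude keyword check."""
    results = {}
    for name, riddle in RIDDLES.items():
        reply = ask_model(riddle["prompt"]).lower()
        results[name] = any(k in reply for k in riddle["pass_keywords"])
    return results


def fake_model(prompt: str) -> str:
    """Hypothetical model that fails the car wash test and passes the mug test."""
    if "car wash" in prompt:
        return "It's only 50 meters, so just walk."
    return "Turn the mug upside down and drink from the sawed-off end."


if __name__ == "__main__":
    print(run_riddles(fake_model))
```

To use it with a real model, replace `fake_model` with a function that takes the prompt as a string and returns the model's reply as a string.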

— Torsten and Peter

3 AI Marketing Tools To Try Today

Krisp AI

Eliminate background noise on both sides of your calls in real-time with AI—works with Zoom, Teams, and every conferencing platform.

Blaze

Drive your marketing strategies with AI-powered automation—Blaze lets you build, scale, and optimize faster than ever before.

Reply

Automate your entire outbound sales sequence—Reply handles cold emails, follow-ups, and multi-channel outreach so you focus on closing, not chasing.

Our January AI in Marketing Report is out.

AI isn't slowing down in 2026. This report gives you the strategic context you need to stay ahead.

January brought major model releases, new enterprise adoption patterns, and shifts in how marketing teams actually use AI. We’ve analyzed the patterns across 31 days of developments. What’s accelerating. What’s overhyped. What you should be testing now.

The report includes implementation frameworks, budget guidance, and forward indicators for Q1. Built for leaders who need to make decisions, not just stay informed.

Available for AI-Ready CMO paid subscribers.

Nice write-up! I did a deep dive on the car wash test with 53 leading models plus a 10k human control group: https://opper.ai/blog/car-wash-test

Thank you for sharing this! Ran the test on the tools that I use. They all failed AND I'm using paid services. Led me to switch to Opus 4.5.

Question: what about Copilot? Curious, was it not included because it uses GPT-5 at its core?