ByteDance Trained a Video Model on TikTok's DNA. The Results Are Breathtaking.

The one thing you need to know in AI today | AI-Ready CMO

AI models are only as good as the data they're trained on. Now imagine what happens when the company sitting on every TikTok video ever uploaded decides to build a video model.

You don't have to imagine anymore.

Seedance 2.0 from ByteDance generates multi-shot cinematic video with synchronized audio from a single text prompt in under 60 seconds. Not just a single clip, but multiple connected scenes with consistent characters, lighting, camera work, and native dialogue. It is spectacular.

Character consistency has long been the biggest unsolved problem in AI video. You could get a stunning single shot, but the moment you needed the same character across multiple scenes, everything fell apart. Different face, different outfit, different vibe. Seedance 2.0 maintains facial features, clothing details, and visual style across an entire multi-shot sequence generated from a single prompt.

And then there's the audio. Seedance generates dialogue, ambient noise, and foley effects (footsteps, cloth rustling, impacts) as part of the same process, with lip-sync in more than eight languages. It also accepts up to 12 reference inputs (images, video clips, audio), so you can feed it a character photo, a music track, and a text description, and get something coherent back. YouTubers have already been going wild with it, producing everything from anime fight scenes to satirical Rick and Morty episodes that sound disturbingly close to the real thing.

Our favorite example: Seedance 2.0 can also generate product launch videos with smooth animations.

One red flag: the model is sometimes creepy. During testing, a Chinese tech journalist discovered that uploading a facial photo caused the model to generate audio nearly identical to the person's real voice—without any voice samples or authorization. ByteDance quickly suspended that capability and introduced live verification steps, but the fact that it worked at all tells you where this technology is heading. Oh, and unlike Sora 2 and Google's Veo 3.1, Seedance output is completely watermark-free. Make of that what you will.

Six months ago, AI video was a novelty: fun to play with, mostly unusable for real work. Today, you have at least three models (Sora 2, Veo 3.1, Seedance 2.0) that produce broadcast-adjacent quality, and each one leapfrogs the last within weeks.

If you haven’t experimented with them yet, now is the time.

— Torsten and Peter

3 AI Marketing Tools To Try Today

AdCreative

Generate high-converting ad creatives in seconds—AdCreative AI produces hundreds of variations optimized for performance.

Descript

Edit video and audio like a document—Descript removes filler words, fixes mistakes, and generates transcripts for faster content production.

Synthesia

Turn text into professional video presentations instantly—Synthesia generates AI presenters in 120+ languages, eliminating production delays and costs.

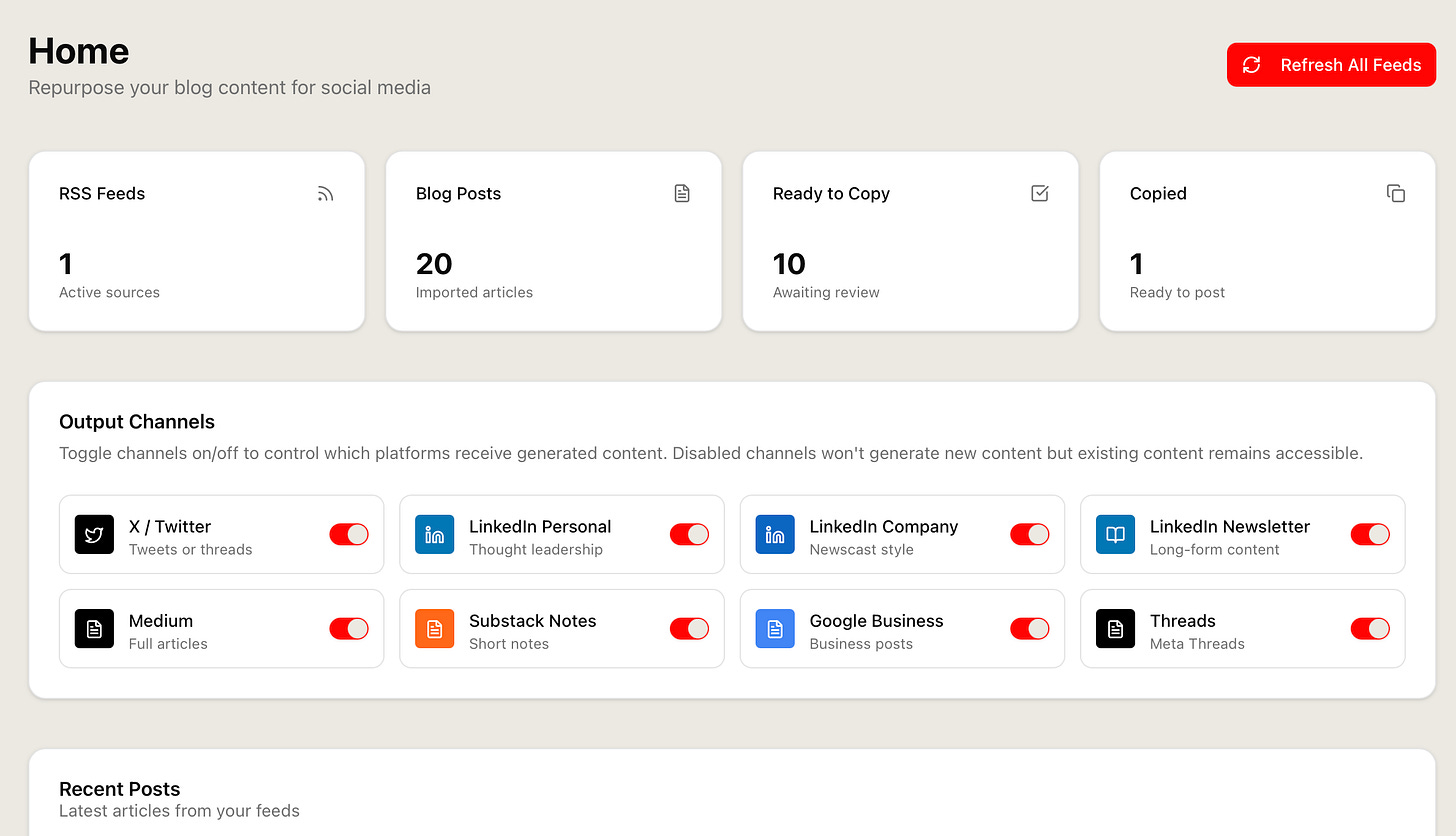

Turn Your RSS Into Platform-Specific Content.

You published a 2,000-word article. Now you need social posts to promote it—but summarizing long-form into platform-ready content takes as long as writing the original piece.

The Content Repurposer converts RSS feeds and long-form posts into platform-specific social content. Drop in your blog URL, get optimized posts for LinkedIn, X, Threads, and Substack Notes—each adapted for character limits, platform tone, and engagement patterns.

One article. Multiple distribution channels. Let AI handle the translation from long-form to social.

Available for AI-Ready CMO paid subscribers.